Inside Intercom's AI-Native Journey: Brian Scanlan

Reaching >3x PRs per R&D FTE

Upcoming Roundtables in London

May 6th: AI-Native GTM with Clay

May 20th: Pydantic x Glyphic Engineering Night

May 28th: Inference Stack Innovation with Crusoe

Intercom is arguably one of the most successful examples of a SaaS company becoming AI-native. They declared a Code Red soon after ChatGPT launched and went all in on Fin, their AI agent for customer support. Since then, the company has been publishing a blog charting its journey to becoming as AI-pilled as they come.

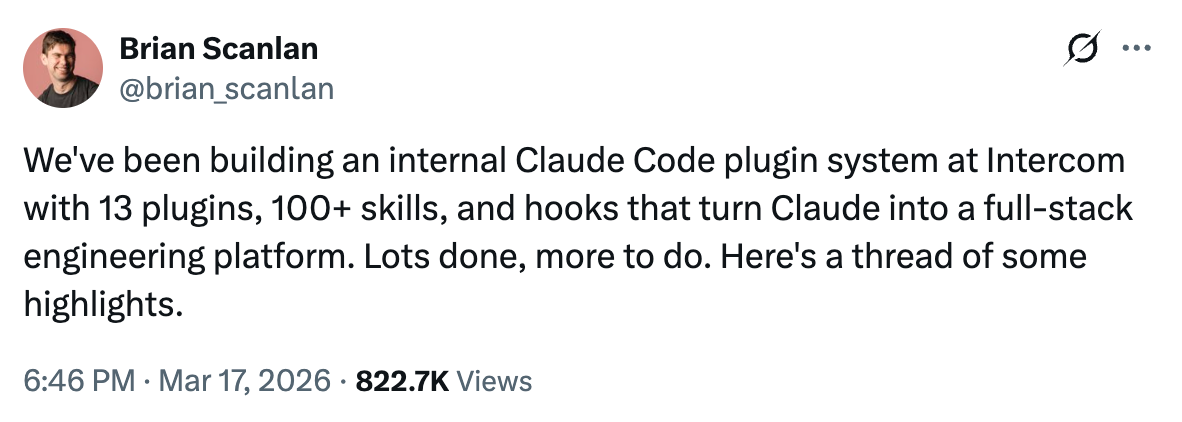

Brian Scanlan, Senior Principal Systems Engineer, went viral when he gave a peek into Intercom’s Claude Code tooling.

I had the chance to speak to Brian about how Intercom is changing its hiring practices, instrumenting Claude Code to measure the right metrics, and consciously investing in Anthropic as a platform, as well as org design, code quality, and graduating from token-maxing to seeing high-quality PRs per engineer inflect. You can read some of the Intercom writing referred to in this interview here and here.

Talent and the interview process

You built a console where Claude can execute arbitrary Ruby against production data, and you mentioned that the top five users of the read-only Rails production console aren’t engineers. They’re design managers, support engineers, and PMs. How has that shift changed what you look for in talent, and how has your interview process changed?

We’ve always looked for people who are flexible and product-oriented. Intercom is a product-oriented company, the founders are product people, and the name of our engineering role is product engineer. Until recently we even had a product component on our engineering interview. The same is true of designers and PMs: we want them close to reality, whether that’s users, code, or whatever else. There’s a great tweet from our head of product where he basically states that nothing else matters apart from code in production. I love that coming from a head of product, because you might expect them to be focused on plans, roadmaps, Gantt charts, and designs. Instead, what he cares about is how fast we’re getting real features and real code into production.

So this is more of a slight evolution than a reinvention. We’ve always been tech-agnostic. We’ve never hired only Ruby on Rails engineers, and we’ve been pretty liberal about hiring people from diverse backgrounds. I came from Amazon, for example. What we interview for is the ability to teach, learn, and change, and to be open-minded, rather than five years on any specific stack or the aura of having worked at Google.

The tweaks we’ve made are around openness to AI. Most people showing up in our loops these days have seen what Intercom is doing and want to be part of it, so it’s not usually a problem. But we still get people from environments that have had zero AI exposure, or from more traditional backgrounds. Their enthusiasm and willingness to change is what matters. We look for openness rather than five years of cloud experience.

Looking forward, we expect design, product, and engineering roles to continue to converge. There’s still incredible value in depth across all these areas, but we now have product engineers doing full product management of features, designers shipping real code, and PMs shipping code as well. It’s not just box-checking. It’s about reframing those roles to look more like a builder role than a member-of-technical-staff role, and moving away from “I’m a designer and I hand stuff off to engineers.” We’re already well set up for this; the trajectory is just more and more towards everyone being able to do everything.

Measuring what matters in Claude Code adoption

You’ve instrumented every Claude Code lifecycle event with OpenTelemetry. This essentially treats Claude Code like a product you’re iterating on internally. What metrics do you actually look at to decide whether a skill is working, and how do you avoid the trap of measuring activity instead of impact, e.g. tokens spent, which can be a very poor proxy for value creation?

There’s no shame in going through a journey of adoption where, at the start, you’re just looking to force people to try things out, to token-max. The opportunity cost is so big, and the amount of change you’re trying to drive through people and the organization is so large, that it’s perfectly fine to have a slightly immature initial phase where everyone has to try this and use it as much as possible. Yes, you’ll waste a bit of time, but what you’re really getting is people becoming comfortable with the tools, figuring out where they work and where they don’t, and where you need to invest. You’re putting pressure on the system. If you’re too careful, or chase the perfect measurements too early, you end up learning lessons later than you should have.

One of the ways we’ve historically built at Intercom is to do the harder stuff early. In a lot of project plans, you load the start with easy work to manufacture the illusion of momentum, and the gnarliest, hardest parts get pushed to the end, which is also when you find out whether the thing actually works. We flip that. We want you to hit the hardest part first. We want you to be hungry for the learning of “does this truly solve the problem” rather than burying it.

So with token-maxing or output measurement, you have to first open people up to change. That means, “just go use it for everything, doesn’t matter how good it is.” You need to encourage that initial use: race people against each other, run a crappy leaderboard, gamify it. But you don’t confuse that with outcomes, which is what you ultimately care about. You go through a juvenile phase deliberately, because gamifying the trial is the easiest way to get people to test it across everything and figure out where it works and where it doesn’t.
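
To make the activity-versus-outcome distinction concrete, here is a minimal Python sketch that splits per-engineer telemetry into an activity number (tokens spent) and an outcome number (merged PRs), and ranks people by either. The `Event` record and its `kind` values are hypothetical stand-ins for whatever an OpenTelemetry pipeline might export; they are not Intercom’s schema.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Event:
    engineer: str
    kind: str           # hypothetical kinds: "tokens" or "pr_merged"
    value: float = 1.0  # token count for "tokens"; ignored for "pr_merged"


def summarize(events):
    """Aggregate raw events into per-engineer activity and outcome metrics."""
    tokens = defaultdict(float)  # activity: how much the tools are being tried
    merged = defaultdict(int)    # outcome: changes that actually shipped
    for e in events:
        if e.kind == "tokens":
            tokens[e.engineer] += e.value
        elif e.kind == "pr_merged":
            merged[e.engineer] += 1
    names = sorted(set(tokens) | set(merged))
    return {
        n: {
            "tokens": tokens[n],
            "merged_prs": merged[n],
            # None until something ships, rather than a divide-by-zero
            "tokens_per_pr": tokens[n] / merged[n] if merged[n] else None,
        }
        for n in names
    }


def leaderboard(summary, metric):
    """Rank engineers by one metric, highest first."""
    return sorted(summary, key=lambda n: summary[n][metric], reverse=True)
```

Ranking by `"tokens"` corresponds to the deliberately juvenile leaderboard of the early adoption phase; ranking by `"merged_prs"` (or a quality-weighted variant) is closer to the outcome you ultimately care about. The two rankings can easily disagree.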

Build vs. buy in an AI-native infrastructure stack

You’ve got 13 plugins, 100+ skills, and a JAMF-managed marketplace. That’s a lot of internal infrastructure to maintain, and I’m sure you’ve been able to rip out some SaaS apps as a result. How do you make the build-vs-buy tradeoff as a company?

Historically, we’ve had an engineering principle that we are technically conservative; we bias towards buying over building. We want people focused on shipping product rather than everything else, and we lean heavily on vendors rather than building expertise across the board.

That has changed somewhat. One of the biggest things to take off at Intercom, arguably bigger than Claude Code’s impact on software development itself, has been the adoption of Claude Code across the rest of the company, with a well-set-up suite of MCPs and access controls. People, usually someone in sales or whoever is driving that work directly, have ended up using it to build their own sales dashboards, customer health dashboards, and highly granular customer-specific reporting. What this has effectively replaced is business intelligence tooling like Tableau.

We didn’t deliberately set out to replace Tableau. We’ve just had a kind of bottoms-up product-market fit for this way of working, and we couldn’t go back to a hosted platform or a more traditional system. Once people have great, well-controlled data, good guardrails, and good places to host reports or small applications, the need for a centralized purchase of something like Tableau diminishes.

So I wouldn’t say we’re consciously trying to rip out big pieces of software, but the need for smaller purchases (role-specific workflows, reporting tools, that kind of thing) is shrinking. We’re probably purchasing far fewer of those, and we’re likely on a path to replacing things like Tableau over time, but very much bottoms-up. We’re not doing top-down “let’s vibe-code a Salesforce replacement today.”

The harness around Claude Code, and the role of the human engineer

The Claude Code hooks you’ve imposed to enforce adherence to your PR workflow and approved tools are great examples of how you’re building your own harness around Claude Code to get maximum value. What other best practices have you had to follow to make Claude Code more reliable over time, especially as we enter the age of background agents? And how do you see the role of human engineers evolving to best enable coding agents?

We’ve had to strengthen the guardrails substantially, provide a lot of guidance, and socialize this problem heavily. We tell people: we are all part of the flywheel, and we expect everyone to be part of it. I’ve written an engineering AI principle that says all technical work is becoming agent-first. In practice, that means you should be using an agent for all technical work, and when it doesn’t get things right every single time, your job is to notice and do something about it.

What that has meant in practice: more linters everywhere, more standardized patterns in our codebase, and explicit written guidance for Claude Code along the lines of “if you’re going to do X, use these paths.” We have the headwind of being a 15-year-old SaaS company with all sorts of legacy in our codebase: patterns we’ve long since moved past but that still exist. We have to explicitly tell Claude about the modern way. What we do with humans is essentially the same: we onboard people by showing them how to get stuff done, what good changes look like, and which parts of the code to ignore as the old way. The pairing and unblocking process for Claude Code mirrors human onboarding.
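
As a rough illustration of a hook that enforces workflow rules, here is a minimal Python sketch. It assumes Claude Code’s hook convention of passing a JSON payload on stdin with `tool_name` and `tool_input` fields, and of treating exit code 2 from a `PreToolUse` hook as “block this call and show stderr to the agent”; verify both against the current hooks documentation. The blocked patterns and messages are hypothetical examples, not Intercom’s rules.

```python
import json
import re
import sys
from typing import Optional

# Hypothetical policy: commands the agent may not run, plus guidance fed back to it.
BLOCKED = [
    (re.compile(r"\bgit\s+push\b.*\b(main|master)\b"),
     "Don't push to main directly; open a pull request instead."),
    (re.compile(r"\bgit\s+commit\b.*--no-verify\b"),
     "Don't skip pre-commit hooks; fix the lint failures instead."),
]


def check(payload: dict) -> Optional[str]:
    """Return a rejection message if a proposed Bash command breaks policy."""
    if payload.get("tool_name") != "Bash":
        return None
    command = payload.get("tool_input", {}).get("command", "")
    for pattern, message in BLOCKED:
        if pattern.search(command):
            return message
    return None


def main() -> int:
    payload = json.load(sys.stdin)
    message = check(payload)
    if message:
        print(message, file=sys.stderr)  # assumed to be surfaced to the agent
        return 2                         # assumed "block this tool call" exit code
    return 0


if __name__ == "__main__":
    sys.exit(main())
```

The rejection message does double duty. It blocks the bad action and also tells the agent what the modern way is, which mirrors the onboarding analogy above.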

Beyond socialization and guardrails, we’ve found that skills, currently the main unit of execution, need to be extremely small. Small, testable, provably good. Skills need to do the full job, because it’s very easy to build a skill that looks like it’s doing a good job but isn’t, and a bad skill is almost indistinguishable from a great one at first glance. The hard part is really pushing on what great looks like: fast, accurate, gets it right first time, tested against all the use cases and edge cases, something you can stand over.

We have a continual flywheel built into pretty much every skill. If something happens that suggests the skill should be updated, the instruction is to update the skill. Skills are self-improving as they’re used. It’s easy to go expansive and build opinionated, monolithic skills that try to do a hundred different things, but I think it’s similar to writing code: you want small, testable units that do one thing well and are understandable.
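
If skills are small, testable units, they can be linted like code. The sketch below assumes skills are stored as `SKILL.md` files that open with a `name`/`description` frontmatter block. The line budget and the `## Feedback` section (where a skill would tell the agent to update the skill when it gets something wrong) are hypothetical conventions invented for illustration.

```python
from dataclasses import dataclass
from typing import List

MAX_BODY_LINES = 80  # hypothetical size budget for a single-purpose skill


@dataclass
class SkillReport:
    name: str
    problems: List[str]


def parse_frontmatter(text):
    """Split a SKILL.md-style file into (metadata, body lines).

    Handles only flat `key: value` pairs, not full YAML.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}, lines
    for end in range(1, len(lines)):
        if lines[end].strip() == "---":
            meta = {}
            for raw in lines[1:end]:
                key, sep, value = raw.partition(":")
                if sep:
                    meta[key.strip()] = value.strip()
            return meta, lines[end + 1:]
    return {}, lines  # unterminated frontmatter: treat everything as body


def lint_skill(text):
    meta, body = parse_frontmatter(text)
    problems = []
    for field_name in ("name", "description"):
        if not meta.get(field_name):
            problems.append(f"missing {field_name}")
    if len(body) > MAX_BODY_LINES:
        problems.append(f"body is {len(body)} lines; split into smaller skills")
    # Hypothetical convention for the self-improvement flywheel
    if not any(line.strip().lower().startswith("## feedback") for line in body):
        problems.append("no feedback section telling the agent to update this skill")
    return SkillReport(meta.get("name", "?"), problems)
```

A check like this can only catch structural smells. Whether a skill is actually “provably good” still comes from testing it against real use cases and edge cases.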

The quality bar has to be extremely high, because the worst case is you spend a lot of time building automation, everyone is very enthusiastic, but the skills aren’t reliable, and then people stop using them, ignore them, bypass them. People just want to get their job done. So we run a small number of extremely high-quality skills rather than a large volume trying to automate complex, sprawling work.

Model lock-in and platform optionality

In terms of building infrastructure around Claude Code, are you building in a way that allows you to port your harness onto Codex or other providers easily, or are you developing switching costs as you invest more into enablement of Claude models specifically?

We knew this when we decided to go all-in on Claude Code. It was a slightly easier decision in December because Opus was ahead, but we knew the frontier wouldn’t always be in one place. Maybe Opus isn’t the best for Ruby, maybe not the best for security, maybe Codex overtakes it on cost. We accepted that.

But this is a platform play. We don’t choose multiple cloud providers and say “Google has the best blob storage, Oracle the best MySQL hosting.” You get the most benefit from a single platform and the compounding benefits of a system that works together. Vibes-wise, Opus may not be the best right now, and you could argue benchmarks back and forth, but I’m not too worried, because we get more benefit from being on one platform and being able to prove we can reproduce work at extremely high quality.

If Anthropic shut down tomorrow, I don’t think it would be a gigantic task to move to Codex, or even to roll our own to a degree. It’s likely a single Codex session away. We do already use Codex in our environment, not as a general-purpose agent but for things like code review and reviewing the output of another agent. Using two different models for meta-analysis is a perfectly good approach to gaining more confidence in code quality.
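
The two-model meta-analysis can be reduced to a small decision rule. In this hypothetical sketch, each reviewer is just a function from a diff to a verdict; in practice one might wrap a Claude or Codex call, but no real model API is shown or implied here. Agreement approves or rejects, and disagreement escalates to a human.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Verdict:
    approve: bool
    notes: str = ""


Reviewer = Callable[[str], Verdict]


def cross_review(diff: str, primary: Reviewer, secondary: Reviewer) -> str:
    """Combine verdicts from two independent reviewers (hypothetical stand-ins)."""
    first = primary(diff)
    second = secondary(diff)
    if first.approve and second.approve:
        return "approve"
    if not first.approve and not second.approve:
        return "reject"
    return "escalate"  # the models disagree, so a human takes a look
```

The value comes from the reviewers being different models with different blind spots; two calls to the same model would mostly agree with each other.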

So we’re being pragmatic, but we put a lot of weight on the fact that it’s hard enough to get one system to work well. It’s not worth trying to get everything working at the level of quality and repeatability we want across multiple providers. That doesn’t mean we won’t change providers in the future. Capabilities are converging, and the providers are all moving towards the same shapes. I’m not too worried about lock-in, but I’d be worried if we were trying to be agnostic for the sake of it. It’s like multi-cloud: you need really strong reasons to go multi-cloud, because you miss out on the benefits of going deep on one.

Org design in an AI-native company

How has your org chart changed in the last two years, given that many AI-native companies operate with very flat structures?

In the last three years, yes, a lot. We went through a phase, like many other companies, of fewer middle managers, a higher ratio of engineers to managers, and updated expectations of managers to be more hands-on, more leading rather than just facilitating. That was happening in the post-ZIRP era at Intercom.

Then, through the building of Fin, Intercom went through dramatic change. We put 80% of R&D on Fin and moved away from our traditional help desk (we’ve since invested back in it, which is great), making very dramatic decisions about shifting people off the help desk into effectively greenfield areas. We dropped our tried-and-tested product team format and moved to what we internally called experience teams: more like factory project teams, far more execution-oriented, with none of the baggage. We staffed them almost exclusively with tenured people who knew how to get things done inside Intercom, leaving traditional onboarding to other teams. Those teams have been really successful, both for focus and because it’s the right model when you’re building with AI: you need to be able to remove baggage, get people out of the way, give them a mission, and let them go.

We’re not as advanced as Anthropic, where there’s more of a research-lab flavor. We’re still a product company that wants to ship features on the roadmap. Sometimes we do experimental work, sometimes we don’t know if a feature is buildable, but we haven’t fully melted into a managerless setup.

The role of planning has changed significantly, though. We’re not doing big roadmaps anywhere now; you can barely plan six weeks out. We used to set high-level roadmap goals every three or six months and then figure out what to build in six-week chunks. Now we set goals every six weeks and figure out what to build, and that can be replanned within days. So planning cycles have shifted dramatically. The teams still exist, but the format, expectations, and scope of what people work on have changed significantly. We just haven’t completely broken up the org.

Measuring code quality

In your recent write-up reflecting on how far you’ve come, you mentioned using static analysis and various heuristics to measure code quality. Can you elaborate on that?

We’ve been working with a group at Stanford doing research on the impact of AI on engineering. We gave them access to our GitHub and they’ve been pulling data. It’s something we care about. We’ve always had a strong testing culture and well-understood patterns. We write code in well-understood monolith applications, so we know how to get most things done. I worry more about software architecture than the code itself.

We initially saw code quality start to decline, despite writing more linters and more tests this year than ever before. The fundamentals had improved: we’d raised the bar on multiple quality metrics by putting AI on those problems and by guiding Claude towards more repeatable software production. But the Stanford research did indicate that quality was slipping in a few directions: greater complexity and some rework.
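
One common static-analysis heuristic for the “greater complexity” signal is an approximation of McCabe’s cyclomatic complexity: one plus the number of branch points in a function. The sketch below applies it to Python source with the standard `ast` module purely for illustration. Intercom works largely in Ruby on Rails, and the interview doesn’t say which tools or heuristics the Stanford group used.

```python
import ast

# Node types counted as one extra branch each (a common approximation).
DECISION_NODES = (ast.If, ast.For, ast.AsyncFor, ast.While, ast.IfExp, ast.ExceptHandler)


def complexity(func: ast.AST) -> int:
    """Approximate cyclomatic complexity: 1 + branch points (nested defs included)."""
    score = 1
    for node in ast.walk(func):
        if isinstance(node, DECISION_NODES):
            score += 1
        elif isinstance(node, ast.BoolOp):
            # `a and b and c` adds two short-circuit branches
            score += len(node.values) - 1
    return score


def function_complexities(source: str) -> dict:
    """Map each function name in `source` to its approximate complexity."""
    tree = ast.parse(source)
    return {
        node.name: complexity(node)
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
    }
```

A single score means little on its own. Tracking the distribution across snapshots of a codebase (for example, the mean and the tail above some threshold) is what would show complexity drifting up or coming back down.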

Then things turned a corner, only in the last six weeks or so, which we noticed when we happened to look back and check in. To a degree we’d already accepted that doubling engineering throughput might come with a small dip in quality; we wanted the acceleration. But today, code quality is increasing every single day, and the overall quality of the codebase is going up. That’s a testament to the detailed work we’ve been doing on inspecting output quality, but also to things like our automatic approval process.

The automatic approval process basically says: if you produce a pull request that’s what you probably should have been creating anyway (small, behind a feature flag, with code observability, single-purpose, and not in dangerous code paths), it gets auto-approved. People are bending their work towards the path of least friction, which is exactly where we want them to go. We use an LLM judge primed with all of our domain knowledge about what a great Intercom pull request looks like, and we’re now producing more of these than ever.
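
Here is a hedged sketch of how such a gate might be shaped. Cheap deterministic checks cover the mechanical criteria listed above (size, feature flag, observability, dangerous paths), and only PRs that pass them reach an LLM judge for the harder “is this single-purpose and what a great PR looks like” question. The thresholds, path prefixes, and `PullRequest` fields are hypothetical, and `judge` is a placeholder callable rather than any real model API.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical thresholds and paths; not Intercom's actual rules.
MAX_CHANGED_LINES = 200
DANGEROUS_PREFIXES = ("app/billing/", "db/migrate/", "config/security/")


@dataclass
class PullRequest:
    title: str
    changed_lines: int
    paths: List[str]
    uses_feature_flag: bool
    adds_observability: bool


def rule_failures(pr: PullRequest) -> List[str]:
    """Deterministic checks that are cheap to run before calling a model."""
    failures = []
    if pr.changed_lines > MAX_CHANGED_LINES:
        failures.append("too large")
    if not pr.uses_feature_flag:
        failures.append("not behind a feature flag")
    if not pr.adds_observability:
        failures.append("no observability")
    risky = [p for p in pr.paths if p.startswith(DANGEROUS_PREFIXES)]
    if risky:
        failures.append("touches dangerous paths: " + ", ".join(risky))
    return failures


def decide(pr: PullRequest, judge: Callable[[PullRequest], bool]) -> str:
    """Auto-approve only if every rule passes and the (hypothetical) judge agrees."""
    if rule_failures(pr):
        return "human review"
    return "auto-approve" if judge(pr) else "human review"
```

Ordering matters for the incentive: the mechanical rules are visible and predictable, so people can shape their PRs to pass them, and the judge only weighs in on the parts that need domain knowledge.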

All those years of telling people what great looks like, through blog posts and code quality books, were valuable. But what has actually transformed code production is giving people an incentive to do the right thing through automatic approvals.

The unsolved problem I worry about is that we may be moving so fast that software architecture drifts into incoherency; you end up with something hard to move, manipulate, or reason about. I’m hedging on the assumption that we’ll soon be able to use agents to identify and solve those drift areas. But honestly, it’s nice to be worrying about higher-level issues rather than whether the code makes sense, or whether we’re producing something below what I’d expect from a senior engineer. That’s a nice problem to have.

The fully loaded cost of a PR

You recently made the point that enterprises thinking narrowly about token spend are underestimating the salary cost per PR. How would you advise other companies to think about the fully loaded cost of a PR?

Think about fixed and variable costs, and the cost of the work being done. There’s a strong parallel to what we saw with Fin. When we launched Fin at $0.99 per resolution, we got pushback from companies claiming it wasn’t much cheaper than what they could achieve with their own customer support team. But when we measured the all-in cost of a conversation in our own business, accounting for software licenses, management overhead, office space, and all the other real costs, it was over $60. And that wasn’t even maxed out.

It’s hard to think about this because people don’t often do these full-cost comparisons. But the economics work out so strongly in favor of whatever can produce the highest-quality output without human intervention. If you have a CS agent that resolves at 70% and you’re competing against one at 55%, given the all-in cost of human resolution, the 55% vendor probably can’t give it away for free.
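
The arithmetic behind the 70% versus 55% claim can be checked with the figures from the Fin example: $0.99 per AI resolution and roughly $60 all-in per human-handled conversation (the interview says “over $60”, so this is a lower bound). The sketch assumes every conversation the agent doesn’t resolve falls back to a human, and it works in integer cents to avoid floating-point noise.

```python
def batch_cost_cents(resolution_pct: int, price_cents: int,
                     human_cost_cents: int = 6000, conversations: int = 100) -> int:
    """Total cost, in cents, of handling a batch of conversations.

    Assumes unresolved conversations go to humans at the all-in human cost.
    Integer division floors the AI-resolved count; it is exact for 100 conversations.
    """
    resolved_by_ai = conversations * resolution_pct // 100
    handled_by_humans = conversations - resolved_by_ai
    return resolved_by_ai * price_cents + handled_by_humans * human_cost_cents


vendor_a = batch_cost_cents(resolution_pct=70, price_cents=99)  # $0.99 per resolution
vendor_b = batch_cost_cents(resolution_pct=55, price_cents=0)   # given away for free
```

Per 100 conversations, the 70% agent comes to 186,930 cents ($1,869.30), while the free 55% agent still costs 270,000 cents ($2,700). The 15 extra conversations it leaves for humans cost $900, far more than the first agent’s entire $69.30 AI bill. A higher human cost only widens the gap.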

The same is true in our engineering environment. Remove as much human work as possible so you can focus on higher-quality work. People need to compare the throughput, quality, ambition, and lack of rework against the new state with token spend factored in. Token spend matters; we do worry about it, mostly to make sure we’re not being sloppy and to find inefficiency. But it’s incredible: we have an awful and ever-growing bill, and yet the cost per change is dropping. As a business, that’s exactly what you want. A black box you put money into and get features out the other side. We’re getting more features out per dollar, with no signs of that changing. We’re going to keep that flywheel turning so we can put in even more money and get even greater multiples of features out the other side.
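
Applied to engineering, the same full-cost view becomes cost per merged PR: fully loaded people cost plus token spend, divided by output. The figures in the example below are invented to show the shape of the calculation (token spend rising while cost per PR falls); they are not Intercom’s numbers.

```python
from dataclasses import dataclass


@dataclass
class Period:
    engineers: int
    loaded_cost_per_engineer: float  # salary, benefits, tools, office, management overhead
    token_spend: float
    merged_prs: int

    def total_cost(self) -> float:
        return self.engineers * self.loaded_cost_per_engineer + self.token_spend

    def cost_per_pr(self) -> float:
        """Fully loaded cost of one merged change in this period."""
        return self.total_cost() / self.merged_prs

    def token_share(self) -> float:
        """Fraction of total cost that is model spend."""
        return self.token_spend / self.total_cost()


# Hypothetical periods: same team, a new token bill, more merged PRs.
before = Period(engineers=10, loaded_cost_per_engineer=20_000, token_spend=0, merged_prs=400)
after = Period(engineers=10, loaded_cost_per_engineer=20_000, token_spend=50_000, merged_prs=1_000)
print(before.cost_per_pr())  # 500.0
print(after.cost_per_pr())   # 250.0
```

In this toy case, model spend grows from nothing to 20% of total cost, yet the cost per change halves. That is the comparison the answer argues for, rather than looking at the token bill in isolation. A fuller version would also weight PRs by quality and rework.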

Brian is hosting a meetup on Wednesday in London, join here!