Agent Labs: Workload-Harness Fit

Agent engineering vs full-stack training

Upcoming Roundtables in London

April 1st: Tool Use: A breakfast discussion

April 22nd: Evals with OpenAI

April 23rd: Mathematical Superintelligence with Harmonic

A range of agent labs (Cursor, Intercom, Cognition and Decagon) released frontier vertical models in recent months, manifesting one of the many plausible end states for app-layer companies: vertically integrate through model training to reduce dependence on the big lans and protect margins.

The direction of travel was clear once companies like Thinking Machine Labs started abstracting infra primitives, as we covered last October:

Fine-tuning and RL are being productised and abstracted for agent labs to capitalise on their data and distribution advantages.

Just recently Thinking Machine Labs’ Tinker was the latest abstraction for fine-tuning, whilst OpenPipe (acquired by CoreWeave) announced a serverless RL product.

These abstractions pave the way for agent labs to start as API consumers of model labs → capture traces/evals → train narrow models (embeddings, autocomplete, router, policy) before attempting larger training runs with open-source models.

More infra providers have set out to catalyse this market - here’s how Prime Intellect described the vision for their recently released model training offering:

If every company had the same access to frontier training infrastructure, the collective creativity of the market would unlock far more breakthroughs.

We’re already starting to see enterprises and application-layer AI startups realize this. Cursor is beginning to post-train their own models optimized directly for Cursor itself as the RL environment, to gain more sovereignty over their product stack.

We want more application-layer companies entering the training game for every vertical of the economy.

There are two camps at the moment when it comes to how agent labs will focus their technical resources.

One argues that the examples set by coding and customer support will be followed by market leaders in other verticals, with research effort focused on training and updating model weights.

The second camp rails against any training, instead focusing research talent on agent engineering (e.g. context management, tool use, long-horizon tasks and everything else that makes a model production-grade for a task) or building the best ‘harness’.

It depends on the workload.

Workload-Harness Fit

Workloads or tasks vary by volume, value, verification properties and horizon, among other dimensions.

Volume — How many times per day/week does this task execute? This determines both the economic incentive to reduce per-query cost and the rate at which your data flywheel generates training signal. Intercom's 2M weekly conversations sit at one extreme; a boutique consulting firm's quarterly strategic analyses sit at the other.

Value per execution — What's the economic impact of each individual task completion? A customer service deflection might save $3-5. A correct medical diagnosis or a successful code deployment to production might be worth thousands. This determines how much you can invest in model quality per query and how much tolerance there is for error.

Verification Properties - Domains differ fundamentally in how verifiable their reward signals are. Sean Cai outlined three verification properties: veracity (how confident are you the signal is correct?), proliferation (how widely is the signal tracked and available?), and asymmetry (how rare and expensive is the expertise needed to judge correctness?).

Time horizon - How many sequential decisions, tool interactions, and context switches does the task require? A single-turn code completion is low horizon. A multi-file refactoring session with debugging is medium. A multi-week scientific experiment design is extremely high horizon. Longer horizons make both reward attribution and training rollout generation exponentially harder.

Just by looking at workloads through these dimensions, we can start to appreciate how agent labs will focus their research efforts.

Cursor and Cognition’s workloads are high volume, high value (especially as enterprise penetration increases), moderate on verification, and increasingly long time horizon with cloud/background agents.

Given these qualities, pre and post-training with sophisticated multi-dimensional reward engineering was justified. The moderate verification means you need to invest heavily in constructing composite reward signals (correctness + style + efficiency + behavioural penalties). The medium horizons require infrastructure for long rollouts and self-summarisation. LoRA or more efficient forms of fine-tuning is probably insufficient here — Cursor explicitly does full-parameter updates because the bar is just that high when you’re trying to raise the ceiling of software engineering, not the floor.

Intercom and Decagon's workloads are high volume, low to moderate value, clean verification, short to medium horizon. Each execution is low value individually but the aggregate volume is massive, creating strong economic incentive to reduce per-query cost. Verification is clean — the ticket was resolved or it wasn't. Horizons are short — typically single-turn or a few exchanges. The data flywheel spins fast because you get millions of labelled outcomes per month.

The volume justifies the training investment in a full post-training pipeline on a strong base open-source model through inference cost savings alone, let alone the performance improvements that Decagon and Intercom are showing. The clean verification signal makes RL tractable. The short horizons mean rollouts are cheap to generate. This is where the Intercom/Apex playbook works best, and where even LoRA-scale fine-tuning on platforms like Prime Intellect could deliver meaningful gains for smaller players. It’s worth noting Intercom was early to recruit a research team of scientists, ahead of companies like Decagon and Sierra.

Harvey and Legora’s workloads are moderate volume, high value, moderate on verification, medium horizon. Volume is meaningful but not massive (thousands rather than millions of executions per week). Value per execution is high. Verification is moderate — an expert can evaluate quality, but it's expensive and slow. Horizons involve multi-step reasoning but typically within a bounded scope.

The jury is out on what approach is best. Harvey allegedly attempted training runs in the past, whilst Legora has stayed clear of any training and fully focused on building the best harness for Anthropic’s models.

The taxonomy of workloads governs which end markets justify training versus agent engineering. But knowing where you sit on the spectrum is only part of the evaluation, the other half is what it actually costs to execute. Cursor's Composer 2 technical report is the first detailed look at the infrastructure overhead required to operate at the rightmost end of this spectrum. .

The Training Imperative

The training pipeline

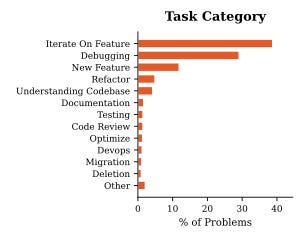

Composer 2’s development follows a two-stage process. The first stage of continued pretraining took an existing open-weight model (Kimi K2.5, a 1 trillion parameter model from Moonshot AI) and trained it further on a massive code-dominated dataset, progressively extending its ability to process longer sequences of code. This stage builds the model’s foundational understanding of programming languages, APIs, and software patterns. The second stage of reinforcement learning is where the model learns how to work as a developer. The model is dropped into realistic coding environments, given tasks derived from actual developer workflows (feature iteration, debugging, refactoring, code review, documentation), and scored on the quality of its end-to-end solutions. Cursor demonstrated empirically that the first stage reliably predicts the success of the second: lower pretraining loss consistently translated to higher RL reward, justifying the investment in pre-taining.

The infrastructure overhead

Composer 2’s training run spanned three GPU regions and four CPU regions globally. The RL phase alone required hundreds of thousands of isolated virtual machines (Firecracker VMs on Cursor’s internal compute platform, Anyrun) to simulate realistic coding environments, each capable of running a full development setup including a browser. These environments needed to be spun up at a rate exceeding 500 pods per second to keep pace with the bursty nature of RL training workloads. Cursor partnered with Fireworks AI for inference during training, running distributed clusters across the US and Europe. The team also wrote custom GPU kernels targeting NVIDIA’s latest Blackwell hardware, including a modified low-precision number format that they found necessary to prevent training instability. The contributor list runs to approximately 50 people! This resembles a global ML infrastructure operation.

Reward engineering and behavioural shaping

Several of Cursor’s most consequential design choices concern how the model is rewarded during RL training, and these reveal the depth of product-informed thinking embedded in the pipeline. Beyond the primary correctness signal, Cursor applies a nonlinear length penalty that penalises the model more steeply for unnecessary effort on simple tasks while tolerating extended reasoning on complex ones, incentivising the model to be fast when it can and thorough when it must. They layer in auxiliary rewards for coding style, communication quality, and product-specific behaviours, including explicit penalties for patterns like creating TODO items and leaving them unfinished. The team actively monitors for emergent problematic behaviours during training and introduces corrective rewards reactively — for instance, when the model began leaving chains-of-thought in code comments or collapsed to using only the terminal tool. The self-summarisation technique, inherited from Composer 1.5, allows the model to chain multiple generations with intermediate summaries within a single training rollout, with the final reward flowing back to upweight good summaries and downweight lossy ones.

Benchmarking philosophy: why CursorBench matters more than public scores Existing public benchmarks are increasingly unreliable indicators of real-world coding agent quality, with four structural problems: domain mismatch (SWE-bench focuses narrowly on bug-fixing), prompt over-specification (public benchmarks assume unnaturally explicit instructions), data contamination (OpenAI suspended SWE-bench Verified reporting after finding models could generate solutions from memory), and narrow evaluation scope (only functional correctness, ignoring code quality, latency, cost, and interactive behaviour). Cursor’s response is CursorBench, an internal evaluation suite built from real coding sessions of their engineering team. The structural contrast is stark: CursorBench tasks require a median of 181 lines of code changes versus 7–10 for SWE-bench, while task prompts are significantly shorter and more ambiguous — mirroring the underspecified nature of real developer requests. The benchmark is continuously refreshed as developer workflows evolve, with each iteration increasing in complexity.

Despite the enormous effort, training Composer 2 was imperative for Cursor.

Developers will pick the best harness for their workloads and Composer 2, a SOTA model at a fraction of the cost, has a chance at winning back both old and new workloads.

Short AGI?

There’s a real risk of obsolescence from large models, but that logic is not too different to saying two companies (or one, if you ask most people) will capture all of the value created by AI in the coming decades.

John Collison asked Bret Taylor half-jokingly whether Sierra is itself premised on a world without AGI:

John: Now we get to it, which is you described building stuff that you know you’re going to throw away because the model capabilities will get there and you’re occasionally... They are developing capabilities that you developed yourself. Isn’t Sierra itself “short AGI?” Sorry, I said I couldn’t resist.

Bret: The short answer is I don’t know. I mean, the fog of war in the software industry is pretty thick right now. I really believe in the applied AI market, though. I think most companies don’t want to buy models or buy software. They want to buy solutions to their problem.

I think there’s so much nuance in how these companies align themselves with different departments at these companies, solve their very unique problems in very specific ways. That is a mix of product, not technology, but product, go-to-market. It’s an ecosystem around it. I think a lot of that still exists because I’m not sure coding the software was necessarily the hard part.

I think the reason for it is most business users want actual solutions to their problems, and they want a company that serves their unique problems in a very specific and bespoke way. I actually am extremely bullish on applied AI. I actually think we could accelerate. I’ll make one statement, which is I think if we paused model development, we’d still have trillions of dollars of economic value.

I think not only am I somewhat skeptical that there will only be two companies in the world, I actually think one of the main things impeding adoption of AI is the lack of existence of all those other companies.

The models are getting better faster than any of us could have ever imagined, with the leaked details of Anthropic’s Mythos suggesting we’re about to experience another step-change improvement in capabilities.

But focus matters, as OpenAI has now admitted.

Startups will create enduring value and technical differentiation in some shape or form will play a role.